'Terrifying' app 'that can remove women's clothes' and create fake nudes is banned

A new smartphone app that can ‘remove women’s clothes’ based on clothed pictures has been taken offline.

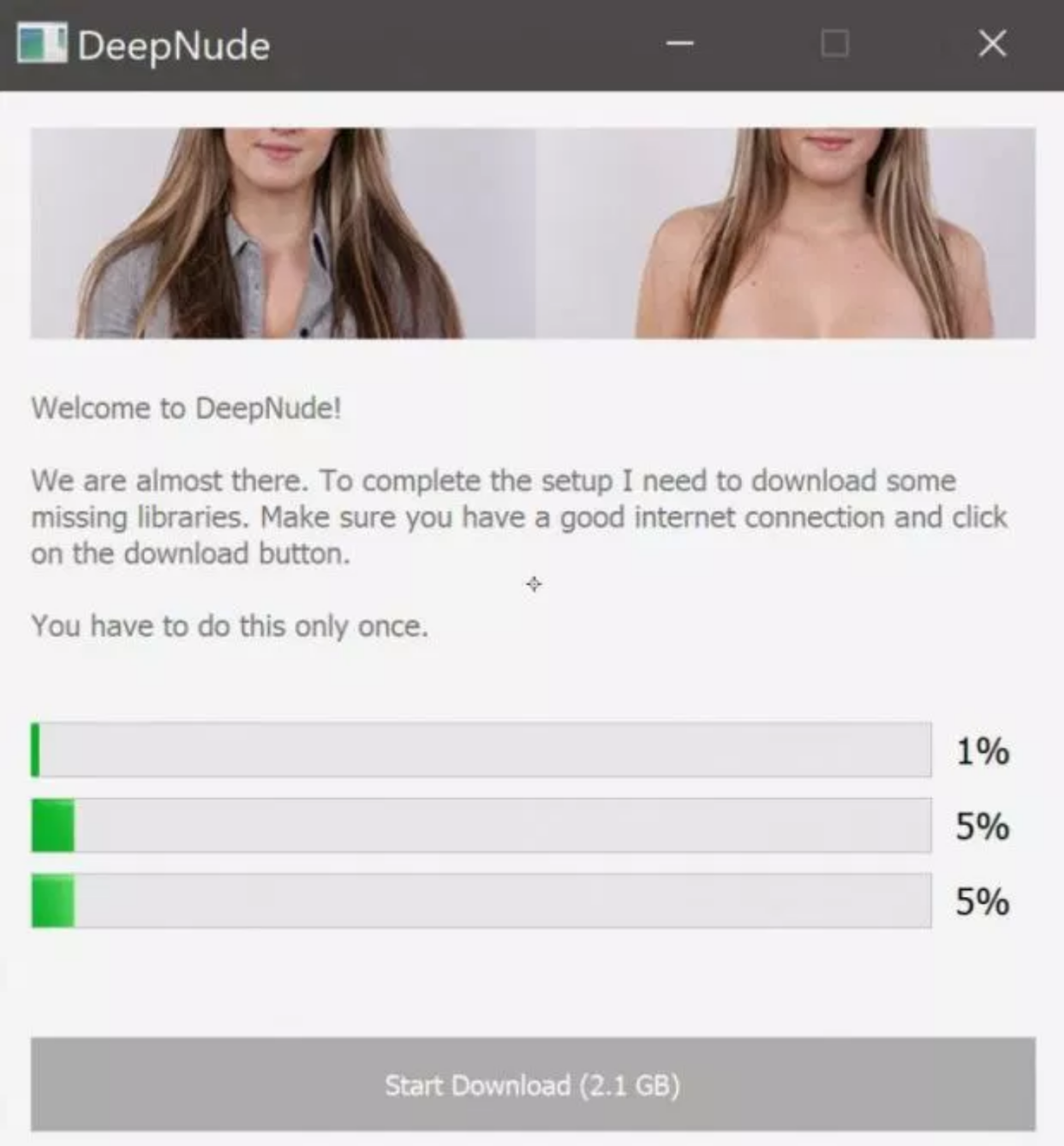

DeepNude was available to download for free from March and used AI to create realistic nudes.

The banned programme used technology similar to that behind so-called ‘deepfake’ videos, which manipulate moving images to produce convincingly realistic clips.

Users were then required to pay $50 (£39) to remove a large watermark that appeared over the generated nude image and export this result.

DeepNude said it took the app offline because it couldn't cope with the high volume of traffic.

However, the company later tweeted: "Here is the brief history, and the end of DeepNude. We created this project for user's entertainment a few months ago.

"We thought we were selling a few sales every month in a controlled manner.

"Honestly, the app is not that great, it only works with particular photos. We never thought it would become viral and we would not be able to control the traffic."

The company will not release new versions of the app and will refund premium customers.

A spokesperson for DeepNude also tweeted that the app would be “misused” and that the company wanted to distance itself from selling the programme.

The tweet read: "Despite the safety measures adopted (watermarks) if 500,000 people use it, the probability that people will misuse it is too high.

"We don't want to make money this way. Surely some copies of DeepNude will be shared on the web, but we don't want to be the ones who sell it."

There were concerns that the app could be used to create revenge porn quickly and that pictures were used without consent.

Katelyn Bowden, founder and CEO of revenge porn activism group Badass, told Motherboard: "This is absolutely terrifying.

“Now anyone could find themselves a victim of revenge porn, without ever having taken a nude photo.

“This tech should not be available to the public.”